Published on April 3, 2026

ChatGPT is a remarkable tool. It can draft emails, summarize documents, explain complex concepts in plain language, and answer an enormous range of questions in seconds. It is easy to see why government employees have reached for it. According to FedScoop, more than 90,000 users across 3,500 federal, state, and local government agencies had already sent over 18 million messages through ChatGPT by 2025. The adoption is real, and the curiosity driving it is completely understandable.

But curiosity and caution need to travel together. ChatGPT is a general-purpose tool built for a general audience. Municipal government work is anything but general. It depends on specificity: your city's zoning code, your department's current policies, your budget documents, your adopted ordinances. When that specificity is missing, the gap between a confident AI answer and an accurate one can cause real problems for staff and residents alike.

This article explains exactly where ChatGPT falls short for government staff, and what a purpose-built government AI tool does differently.

ChatGPT is not designed to be accurate in the way government work requires. It is designed to produce fluent, plausible responses based on patterns in its training data. Those are not the same thing, and the difference matters enormously when a planning technician needs the correct setback requirement for a specific zoning district, or a permit coordinator needs to know exactly which inspection steps apply to a commercial renovation.

The research on AI hallucinations is sobering. A peer-reviewed study published in the Journal of Medical Internet Research found hallucination rates of 28.6% for GPT-4 on systematic reference generation tasks. The HaluEval benchmark found that ChatGPT-4 produced inaccurate, nonsensical, or unverifiable responses in 19.5% of cases overall. On complex factual reasoning tasks, hallucination rates for OpenAI's o3 series reached 33% to 51%. These figures are not edge cases. They reflect a structural characteristic of how large language models work.

For city staff, an inaccurate answer has real consequences. A resident who receives incorrect information about permit fees or zoning rules may begin a project based on that guidance, only to learn it was wrong when they submit their application. The error originated with the AI, but the city staff member who relied on it is accountable for the outcome. ChatGPT does not take responsibility for its answers. Your team does.

ChatGPT does not have access to your city's adopted municipal code, your zoning ordinances, your current fee schedule, your most recent budget amendments, or the meeting minutes from last Tuesday's city council session. It was trained on a broad dataset of publicly available internet text with a knowledge cutoff date. Whatever your city published after that date does not exist in ChatGPT's knowledge base, and even content that predates the cutoff may not have been included in training.

This is not a small gap. Municipal codes are updated regularly. Zoning maps change. Fees are adjusted. Policies evolve. A general AI tool gives you a response drawn from general knowledge about how municipal governments typically work, not knowledge of how your government works. That distinction is the difference between useful guidance and a liability.

Consider a scenario familiar to any planning department: a resident calls to ask whether their property is eligible for an accessory dwelling unit. The correct answer depends on the specific zoning district, lot size minimums, setback requirements, and any overlay conditions that apply to that parcel. ChatGPT may produce a thorough-sounding answer about ADU rules in general. But only a tool grounded in your city's current code can give an answer that is actually reliable. For city planners fielding questions like this daily, that difference in reliability matters with every interaction.

The default version of ChatGPT is a consumer product. When a government employee types a question into it, that input travels to OpenAI's servers. Depending on the user's account settings, that content may be used to train future versions of the model. Most users do not read the terms closely enough to know how their data is handled, and many do not change the default settings.

The risks of this arrangement have already materialized in government settings. In a widely covered incident, a senior U.S. cybersecurity official uploaded documents marked "for official use only" to the public version of ChatGPT. The documents contained contracting information that was not intended for public release. This was not a junior employee making a casual error. It was a senior official, under time pressure, reaching for a familiar tool without stopping to consider where the data was going. It is a scenario that plays out in organizations at every level.

For local government, the categories of sensitive information that staff handle daily are significant. Resident case files, personnel records, legal correspondence, budget details, and code enforcement histories are all the kinds of content that should not be flowing into a general-purpose commercial AI platform. For city IT departments responsible for data governance, the question is not just whether ChatGPT is useful. It is whether its use can be governed, audited, and controlled in a way that meets the city's obligations to its residents and its employees.

Shadow AI refers to employees using AI tools that have not been reviewed, approved, or monitored by their organization's IT or leadership teams. It is the AI equivalent of shadow IT: unofficial tools filling a gap that official tools have not addressed. And according to recent research, it is widespread. A 2025 analysis by ISACA found that more than 80% of workers use unapproved AI tools in their jobs, including nearly 90% of security professionals. Among senior executives, the rate rises to 93%.

The financial exposure from shadow AI is measurable. Research cited in the Journal of Accountancy found that the average cost of a data breach is $670,000 higher when it involves shadow AI than when it involves a sanctioned tool. Three-quarters of employees using shadow AI admitted to sharing potentially sensitive information with unapproved tools, most commonly employee data, customer data, and internal documents.

For city governments, shadow AI represents an invisible compliance risk. Staff are not using these tools maliciously. They are trying to work more efficiently, and the impulse is a good one. But without visibility into which tools are being used and what data is being shared, city leadership cannot manage the risk. Providing staff with a vetted, purpose-built AI tool is one of the most effective ways to address the shadow AI problem: when the right tool is available and easy to use, staff have far less reason to reach for an unsanctioned one. This is a conversation worth having at the leadership level, and mayors and city managers evaluating AI adoption are increasingly making it a priority.

OpenAI has acknowledged that the consumer version of ChatGPT is not appropriate for government use. In January 2025, the company launched ChatGPT Gov, a version designed for federal agencies that allows organizations to host the model within their own secure cloud environment. It addresses several of the data privacy concerns that make consumer ChatGPT unsuitable for government work.

That is a meaningful improvement, and it deserves acknowledgment. But even ChatGPT Gov does not resolve the core accuracy problem for local government staff. Hosting ChatGPT inside a government cloud does not teach it your city's zoning code. It does not give it access to your adopted ordinances, your fee schedules, or your department procedures. It is still a general-purpose model that draws on broad training data rather than the specific, verified documents that define how your city operates.

A useful analogy: giving a highly capable new employee access to your secure office building does not mean they know where your files are or what your policies say. They still need to be connected to the right sources of information before their expertise becomes useful. The same principle applies to AI. Secure access is necessary, but it is not sufficient. The model also needs to be connected to the right data, with the right guardrails, to serve government staff reliably.

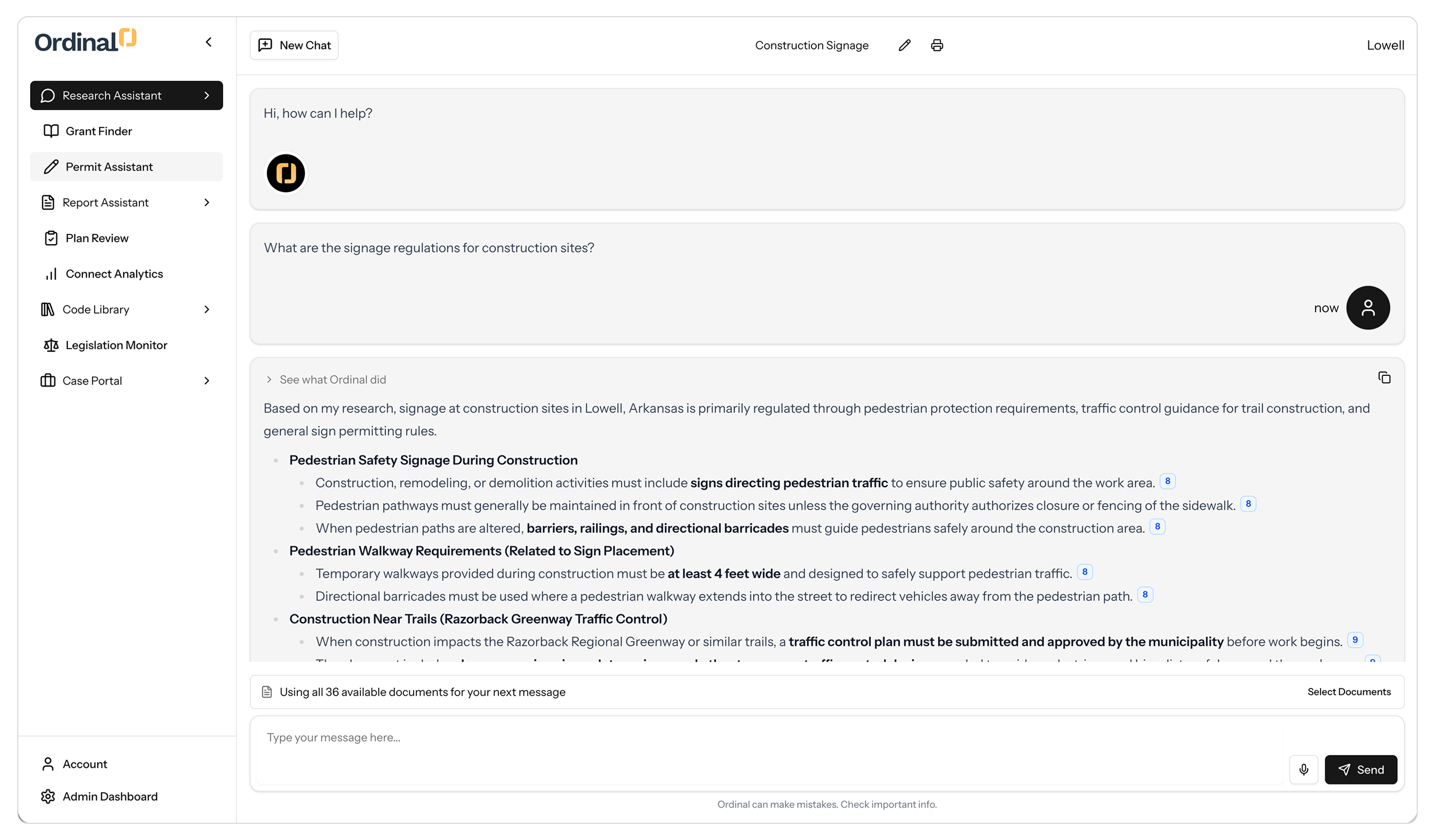

A purpose-built government AI tool is designed around the premise that accuracy requires specificity. Rather than drawing on broad internet training data, it uses retrieval-augmented generation (RAG) to search a curated library of your city's own documents: municipal codes, zoning ordinances, adopted policies, meeting minutes, and department procedures. Every answer it produces is grounded in documents your city has reviewed and approved, and every answer includes a reference to the source so staff can verify the response directly.

The practical difference shows up immediately. When a staff member asks about the required setback for a residential addition in a specific zoning district, a purpose-built tool searches your current code and returns the precise requirement, with a citation. A general AI tool returns a reasonable-sounding answer based on how zoning setbacks typically work, which may or may not apply to your city. One answer is useful. The other requires verification before it can be acted on, which largely defeats the purpose of asking.
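The retrieve-then-cite pattern described above can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the section numbers, setback figures, and fee text are invented, and the names `Passage`, `LIBRARY`, `retrieve`, and `answer` are hypothetical. Simple keyword overlap stands in for the search step, and the matched passage is returned verbatim rather than handed to a language model, so this shows only the grounding and citation idea, not how any particular product implements RAG.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # citation shown to staff alongside the answer
    text: str    # excerpt from a city-approved document


# Hypothetical excerpts with invented section numbers and values.
# A real deployment would load the city's own adopted documents.
LIBRARY = [
    Passage("Zoning Code 17.20.040 (R-1 setbacks)",
            "In the R-1 district the minimum rear setback for a residential addition is 20 feet."),
    Passage("Zoning Code 17.24.040 (R-2 setbacks)",
            "In the R-2 district the minimum rear setback for a residential addition is 15 feet."),
    Passage("Fee Schedule 2026, Section 3",
            "The building permit application fee for a residential addition is listed in Table 3-A."),
]

STOPWORDS = {"the", "a", "an", "for", "in", "is", "of", "what", "to", "and"}


def tokens(text):
    """Lowercase the text and return its set of non-stopword terms."""
    return {t for t in re.findall(r"[a-z0-9-]+", text.lower()) if t not in STOPWORDS}


def retrieve(question, library, min_overlap=2):
    """Return the passage sharing the most terms with the question, or None."""
    q = tokens(question)
    scored = [(len(q & tokens(p.text)), p) for p in library]
    best_score, best = max(scored, key=lambda pair: pair[0])
    return best if best_score >= min_overlap else None


def answer(question, library=LIBRARY):
    """Answer only from the library, and always attach the source."""
    passage = retrieve(question, library)
    if passage is None:
        # Declining is safer than guessing from general knowledge.
        return "No matching passage found in the approved library."
    return f"{passage.text} [Source: {passage.source}]"


print(answer("What is the rear setback for an addition in the R-1 district?"))
print(answer("When is the next council meeting?"))
```

The design choice worth noticing is the refusal branch: when nothing in the approved library matches, the sketch says so instead of producing a plausible-sounding guess.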

Purpose-built tools also reduce the onboarding burden for new staff. According to Ordinal's own research with municipal partners, employees in some municipalities spend nearly 40% of their workweek on information retrieval alone. A tool grounded in your city's documents means new planners, clerks, and coordinators can find accurate answers independently from their first week on the job, without pulling a senior colleague away from active work. The quality of your AI tool's outputs is directly tied to the quality and organization of the documents it draws from. For more on how modernizing your document infrastructure supports this kind of readiness, see how cities are approaching municipal code upgrades.

ChatGPT is a capable, well-designed tool for general purposes. Writing, summarizing, brainstorming, drafting: these are tasks it handles well, and government employees benefit from using it for that kind of work in the right context, with the right safeguards in place.

But government work also requires accuracy grounded in your specific policies, data privacy practices that meet public-sector obligations, and auditability that lets leadership understand how AI is being used across the organization. For those requirements, a general-purpose tool is not the right fit. The good news is that purpose-built alternatives exist, are designed for cities of every size, and are already delivering results in municipalities across the country.

The question for city leadership is not whether AI belongs in local government. It clearly does. The question is whether the AI tools your staff are using were built for the work your city actually does.

FedScoop: How Risky Is ChatGPT? Depends Which Federal Agency You Ask

JMIR / PMC: Hallucination Rates and Reference Accuracy of ChatGPT for Systematic Reviews

Scott Graffius: Are AI Hallucinations Getting Better or Worse? (2026 Analysis)

Visual Capitalist: Ranked: AI Hallucination Rates by Model

CSO Online: CISA Chief Uploaded Sensitive Government Files to Public ChatGPT

OpenAI: Introducing ChatGPT Gov

ISACA: The Rise of Shadow AI: Auditing Unauthorized AI Tools in the Enterprise (2025)

Journal of Accountancy: Lurking in the Shadows: The Costs of Unapproved AI Tools (2025)

Cybersecurity Dive: Shadow AI Is Widespread and Executives Use It the Most

IBM: Enhancing Regulatory Compliance in the AI Age by Grounding Documents with Generative AI

REI Systems: The Right Tool for the Mission: Big and Small AI in Government

Intelligent Document Processing: Purpose-Built AI vs. General-Purpose AI: Why It Matters

Center for Democracy and Technology: AI Governance Checklist for State and Local Leaders

Ordinal: Raises $1M to Help Municipalities Work Smarter With AI
